3 Automated Testing Techniques, Part 2: Data-Flow Testing

When writing tests for some new functionality, our goal should be to write the minimum number of tests with the highest likelihood of finding defects. To do this, we need a repeatable, consistent approach to defining our tests that will result in a similar outcome and quality of tests across the development team.

In my previous post, I covered basis testing. Once we have completed our structured basis testing analysis, we move on to data-flow testing analysis.

This testing involves making sure we handle every combination of the usage of data within our programs. These combinations of data usage will often line up with the tests from structured basis testing. However, working through these combinations will often result in new tests being needed. The process involves identifying each time a variable is defined, where it is used, and then finding all the combinations that are not already covered in our test matrix.

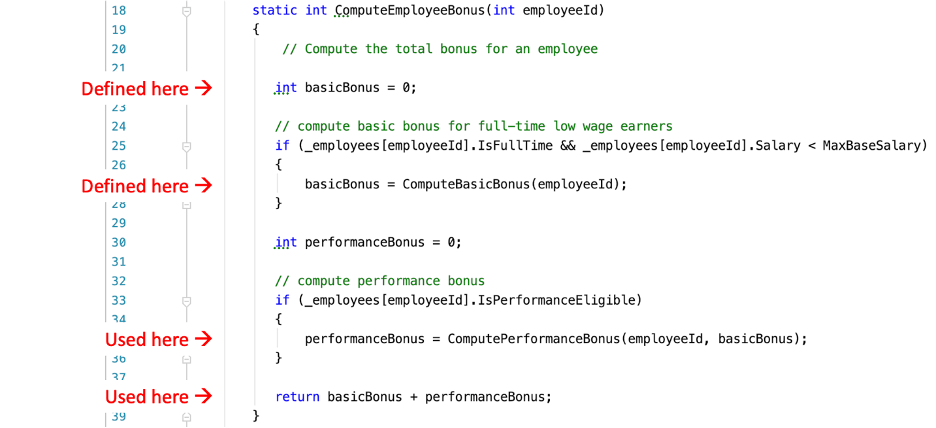

Here’s the analysis of the basicBonus variable in our method.

The following are the resulting combinations of defining and using the basicBonus variable:

- Defined on 22, first used on 35

- Covered by Test 2 and 3

- Defined on 22, first used on 38

- Not covered

- Defined on 27, first used on 35

- Covered by Test 1

- Defined on 27, first used on 38

- Covered by Test 4

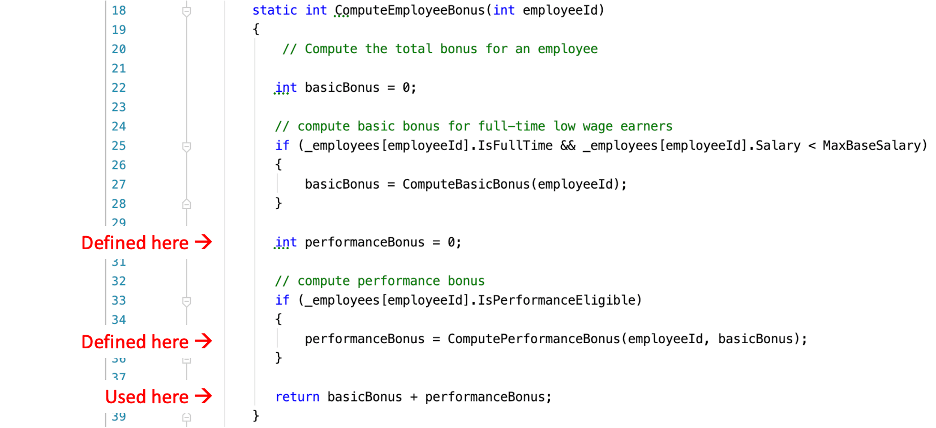

Here’s a similar analysis for the performanceBonus variable in our method.

The following are the resulting combinations of defining and using the performanceBonus variable:

- Defined on 30, first used on 38

- Covered by Test 1, 2, and 3

- Defined on 35, first used on 38

- Covered by Test 4

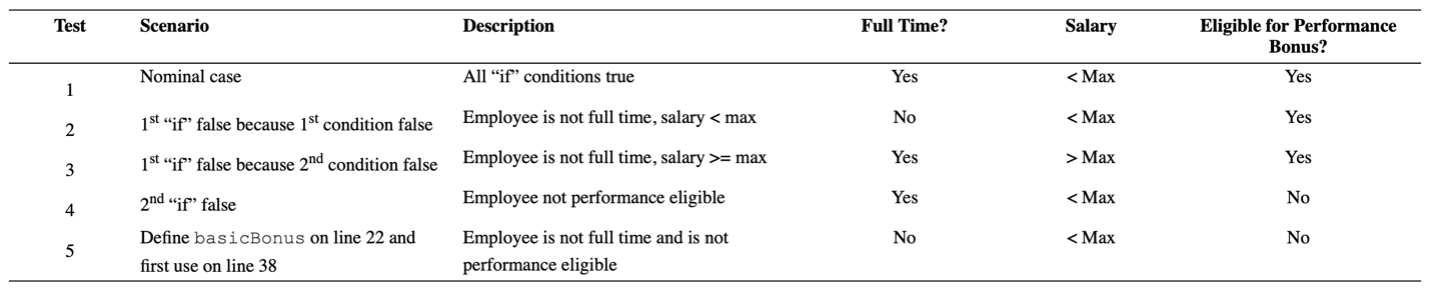

Our data-flow testing analysis uncovered a test case for the scenario where the basicBonus was defined on line 22 but not used until line 38. The conditions for this scenario would not be identified in structured basis testing, which is why using data-flow analysis is valuable.

Our test matrix with the additional entry for this scenario:

Now it’s time to move on to error guessing, which I will cover in my next post.